Google’s chatbot panic

On the infinite insecurities of a self-styled creative genius who really just buys other people’s ideas.

This is the last day (Feb 17) of my Australian tour for my book Chokepoint Capitalism with my co-author, Rebecca Giblin. We’ll be in Canberra at the Australian Digital Alliance Copyright Forum.

The really remarkable thing isn’t just that Microsoft has decided that the future of search isn’t links to relevant materials, but instead lengthy, florid paragraphs written by a chatbot who happens to be a habitual liar — even more remarkable is that Google agrees.

Microsoft has nothing to lose. It’s spent billions on Bing, a search-engine no one voluntarily uses. Might as well try something so stupid it might just work. But why is Google, a monopolist who has a 90+% share of search worldwide, jumping off the same bridge as Microsoft?

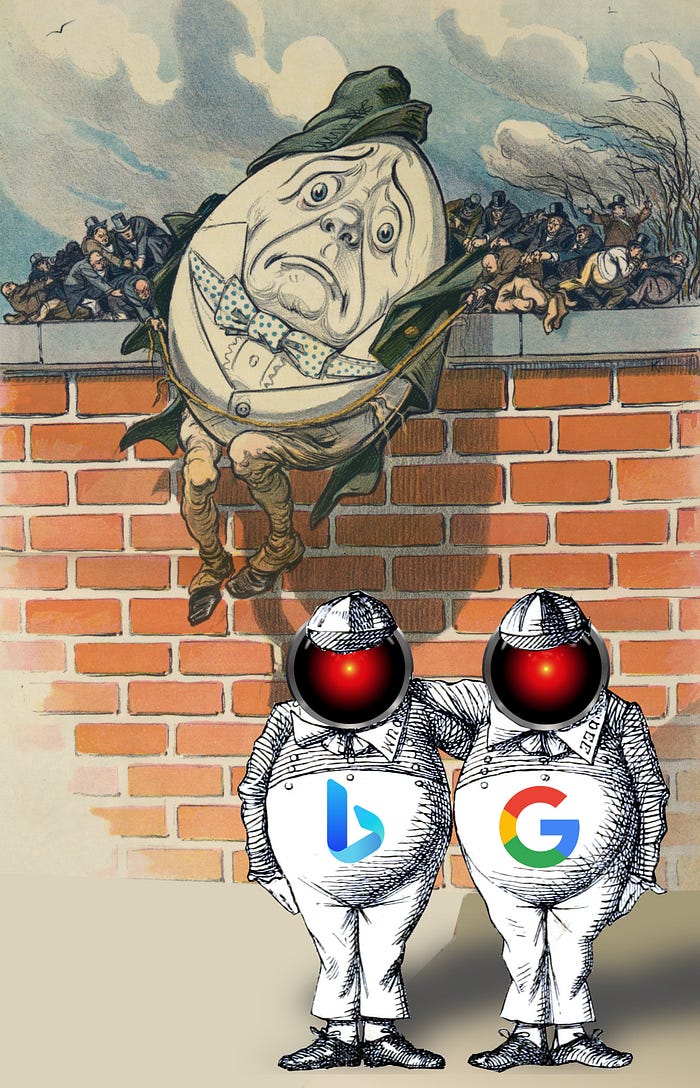

There’s a delightful Mastodon thread about this, written by Dan Hon, where he compares the chatbot-enshittified front ends to Bing and Google to Tweedledee and Tweedledum:

https://mamot.fr/@danhon@dan.mastohon.com/109832788458972865

“At the front of the house, Alice found two curious characters, both search engines.

“‘I am Googl-E,’ said the one plastered in advertisements.

“‘And I am Bingle-Dum,’ said the other, who was the smaller of the two, and sported a pout, as to having fewer visitors and opportunity for conversation than the other.

“‘I know you,’ said Alice. ‘Are you to present me with a puzzle? Perhaps one of you tells the truth and the other lies?’

“‘Oh no,’ said Bingle-Dum.

“‘We both lie,’ added Googl-E.”

It just keeps getting better:

“‘This is truly an intolerable situation. If you both lie,’

“ — ‘And lie convincingly,’ added Bingle-Dum —

“‘Yes, thank you. If that is so, then how am I to ever trust either of you?’

“Googl-E and Bingle-Dum turned to face each other and shrugged.”

Chatbot search is a terrible idea, especially in an era in which the web is likely to fill up with vast mountains of AI bullshit, the frozen gabble of stochastic parrots:

https://dl.acm.org/doi/10.1145/3442188.3445922

Google’s chatbot strategy shouldn’t be adding more madlibs to the internet — rather, they should be figuring out how to exclude (or, at a minimum, fact-check) the confident nonsense of the spammers and SEO creeps.

And yet, Google is going all-in on chatbots, with the company CEO ordering an all-hands scramble to cram chatbots into every part of the googleverse. Why on earth is the company racing Microsoft to see who can be first to leap off the peak of inflated expectations?

https://en.wikipedia.org/wiki/Gartner_hype_cycle

I just published a theory in The Atlantic, under the title “How Google Ran Out of Ideas,” where I turn to competition theory to explain Google’s sweaty insecurity, an anxiety complex that the company has been plagued by nearly since its inception:

https://www.theatlantic.com/ideas/archive/2023/02/google-ai-chatbots-microsoft-bing-chatgpt/673052/

The core theory: a quarter of a century ago, the Google founders had one amazing idea, a better way to do search. The capital markets showered the company in money, and it hired the very best, brightest, most creative people it could find, but then it created a corporate culture that was incapable of capitalizing on their ideas.

Every single product Google made internally — except for its Hotmail clone — died. Some of those products were good, some were terrible, but it didn’t matter. Google — a company that cultivated the ballpit-in-the-lobby whimsy of a Willy Wonka factory — couldn’t “innovate” at all.

Every successful Google product except search and Gmail is an acquisition: mobile, ad-tech, videos, server management, docs, calendaring, maps, you name it. The company desperately wants to be a “making things” company, but it’s actually a “buying things” company. Sure, it’s good at operationalizing and scaling products, but that’s table-stakes for any monopolist:

https://www.eff.org/deeplinks/2020/06/technical-excellence-and-scale

The cognitive dissonance of a self-styled “creative genius” whose true genius is spending other people’s money to buy other people’s products and take credit for them drives people to do truly bonkers things (as any Twitter user can attest).

Google has long exhibited this pathology. In the mid-2000s — after Google chased Yahoo into China and started censoring its search-results and collaborating on state surveillance — we used to say that the way to get Google to do something stupid and self-destructive was to get Yahoo to do it first.

This was quite a time. Yahoo was desperate and failing, a graveyard of promising acquisitions that were gutshot and left to bleed out right there on the public internet as the dueling princelings of Yahoo senior management performed a backstabbing Medici LARP that had them competing to see who could sabotage the others. Going into China was an act of desperation after the company was humiliated by Google’s vastly superior search. Watching Google copy Yahoo’s idiotic gambits was baffling.

Baffling at the time, that is. As time went by and Google slavishly copied other rivals, its pathology of insecurity revealed itself. Google repeatedly failed to make a popular “social” product, and as Facebook commanded an ever-larger share of the ad-market, Google made a full-court press to compete with it. The company made Google Plus integration a “key performance indicator” for every division, and the result was a bizarre morass of ill-starred “social” features in every Google product. These were products that billions of users relied on for high-stakes operations, and they were suddenly festooned with “social” buttons that made no sense.

The G+ debacle was truly incredible: some G+ features and integrations were great and developed loyal followings, but these were overshadowed by the incoherent, top-down insistence on making Google a “social-first” company. When G+ collapsed, it totally imploded, and the useful parts of G+ that people had come to rely upon disappeared along with the stupid parts.

For anyone who lived through the G+ tragicomedy, Google’s pivot to Bard, a chatbot front-end for search results, is grimly familiar. It’s a real “die a hero or live long enough to become a villain” moment. Microsoft, the monopolist that was only stayed from strangling Google in its cradle by the trauma of its antitrust dragging, has transformed from a product-creation company to an acquisitions and operations company, and Google is right behind it.

Just last year, Google laid off 12,000 staffers to please a private-equity “activist investor” — in the same year, it declared a $70b stock buyback, extracting enough capital to pay those 12,000 Googlers’ salaries for the next 27 years. Google is a financial company with a sideline in adtech. It has to be: when your only successful path to growth requires access to the capital markets to fund anticompetitive acquisitions, you can’t afford to piss off the money-gods, even if you have a “dual share” structure that lets the founders outvote every other shareholder:

https://abc.xyz/investor/founders-letters/2004-ipo-letter/
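The "27 years" figure above can be sanity-checked with some back-of-the-envelope arithmetic. The sketch below takes only the three numbers quoted in the text ($70b, 12,000 staffers, 27 years) and works out the per-person annual cost they imply. The function name is made up for illustration, and the post does not say what compensation figure the original calculation assumed, so treat the result as an implied value rather than a reported one.

```python
def implied_annual_cost_per_person(buyback_usd: float, headcount: int, years: float) -> float:
    """Annual cost per person at which `buyback_usd` would cover
    `headcount` people for `years` years (hypothetical helper)."""
    return buyback_usd / (headcount * years)


# Figures quoted in the text: $70b buyback, 12,000 staffers, 27 years.
per_person = implied_annual_cost_per_person(70e9, 12_000, 27)
print(f"Implied annual cost per laid-off staffer: ${per_person:,.0f}")
```

That comes to roughly $216,000 per person per year, so the claim holds if the all-in annual cost of a laid-off Googler is about that much.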

ChatGPT and its imitators have all the hallmarks of a tech fad, and are truly the successor to last season’s web3 and cryptocurrency pump-and-dumps. One of the clearest and most inspiring critiques of chatbots comes from science fiction writer Ted Chiang, whose instant-classic critique was called “ChatGPT Is a Blurry JPEG of the Web”:

https://www.newyorker.com/tech/annals-of-technology/chatgpt-is-a-blurry-jpeg-of-the-web

Chiang points out a key difference between the output of ChatGPT and human authors: a human author’s first draft is often an original idea, badly expressed, while the best ChatGPT can hope for is a competently expressed, unoriginal idea. ChatGPT is perfectly poised to improve on the SEO copypasta that legions of low-paid workers pump out in a bid to climb the Google search results.

Speaking of Chiang’s essay in this week’s episode of the This Machine Kills podcast, Jathan Sadowski expertly punctures the ChatGPT4 hype bubble, which holds that the next version of the chatbot will be so amazing that any critiques of the current technology will be rendered obsolete.

Sadowski notes that OpenAI’s engineers are going to enormous lengths to ensure that the next version won’t be trained on any of the output from ChatGPT3. This is a tell: if a large language model can produce materials that are as good as human-produced text, then why can’t the output of ChatGPT3 be used to create ChatGPT4?

Sadowski has a great term to describe this problem: “Habsburg AI.” Just as royal inbreeding produced a generation of supposed supermen who were incapable of reproducing themselves, so too will feeding a new model on the exhaust stream of the last one produce an ever-worsening gyre of tightly spiraling nonsense that eventually disappears up its own asshole.

If you’d like an essay-formatted version of this post to read or share, here’s a link to it on pluralistic.net, my surveillance-free, ad-free, tracker-free blog:

https://pluralistic.net/2023/02/16/tweedledumber/#easily-spooked

Image:

Cryteria (modified)

https://commons.wikimedia.org/wiki/File:HAL9000.svg

CC BY 3.0

https://creativecommons.org/licenses/by/3.0/deed.en

Cory Doctorow (craphound.com) is a science fiction author, activist, and blogger. He has a podcast, a newsletter, a Twitter feed, a Mastodon feed, and a Tumblr feed. He was born in Canada, became a British citizen and now lives in Burbank, California. His latest nonfiction book is Chokepoint Capitalism (with Rebecca Giblin), a book about artistic labor markets and excessive buyer power. His latest novel for adults is Attack Surface. His latest short story collection is Radicalized. His latest picture book is Poesy the Monster Slayer. His latest YA novel is Pirate Cinema. His latest graphic novel is In Real Life. His forthcoming books include Red Team Blues, a noir thriller about cryptocurrency, corruption and money-laundering (Tor, 2023); and The Lost Cause, a utopian post-GND novel about truth and reconciliation with white nationalist militias (Tor, 2023).